我们在使用Hive的时候肯定遇到过建立了一张分区表,然后手动(比如使用 cp 或者 mv )将分区数据拷贝到刚刚新建的表作为数据初始化的手段;但是对于分区表我们需要在hive里面手动将刚刚初始化的数据分区加入到hive里面,这样才能供我们查询使用,我们一般会想到使用 alter table add partition 命令手动添加分区,但是如果初始化的分区太多,这样一条一条地手动添加分区不免过于麻烦(虽然我们可以写个脚本生成添加分区的命令)。今天我将给大家介绍的命令可以只使用一次即可添加全部的分区,如下:

MSCK REPAIR TABLE table_name;

运行上面的命令后,Hive会检测HDFS目录下存在但表的metastore中不存在的partition元信息,然后更新到metastore中。官方文档对其的描述如下:

Hive stores a list of partitions for each table in its metastore. If, however, new partitions are directly added to HDFS (say by using

hadoop fs -putcommand), the metastore (and hence Hive) will not be aware of these partitions unless the user runsALTER TABLE table_name ADD PARTITIONcommands on each of the newly added partitions.

However, users can run a metastore check command with the repair table option:MSCK REPAIR TABLE table_name;which will add metadata about partitions to the Hive metastore for partitions for which such metadata doesn't already exist. In other words, it will add any partitions that exist on HDFS but not in metastore to the metastore. See HIVE-874 for more details. When there is a large number of untracked partitions, there is a provision to run

MSCK REPAIR TABLEbatch wise to avoid OOME. By giving the configured batch size for the propertyhive.msck.repair.batch.sizeit can run in the batches internally. The default value of the property is zero, it means it will execute all the partitions at once.

The equivalent command on Amazon Elastic MapReduce (EMR)'s version of Hive is:ALTER TABLE table_name RECOVER PARTITIONS;Starting with Hive 1.3, MSCK will throw exceptions if directories with disallowed characters in partition values are found on HDFS. Use

hive.msck.path.validationsetting on the client to alter this behavior; "skip" will simply skip the directories. "ignore" will try to create partitions anyway (old behavior). This may or may not work.

使用

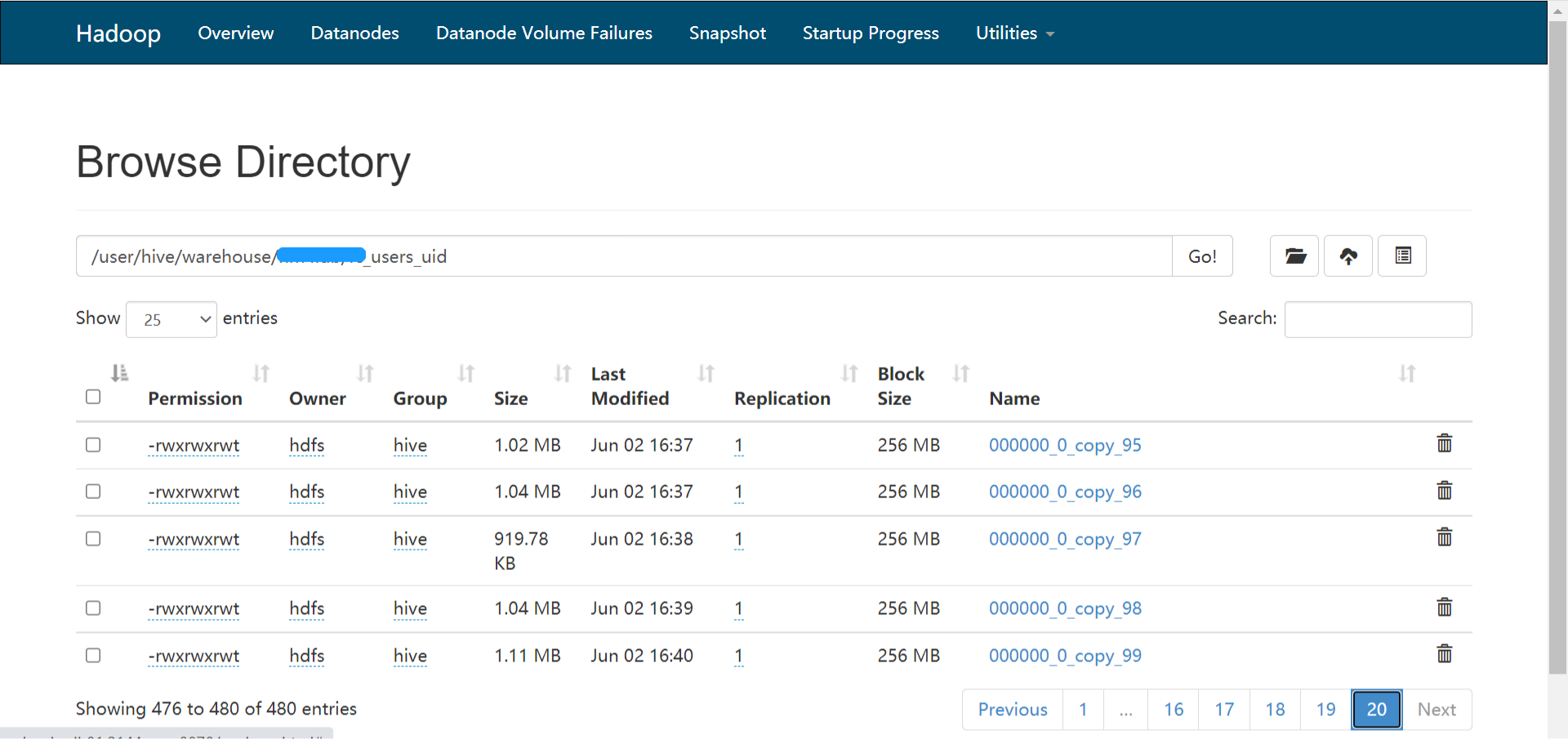

下面我来介绍如何使用这个命令,我新建了名为 temp.iteblog_hadoop 的表,然后手动 mv 了几个目录的数据到这个表数据目录下,这几个手动添加的目录在hive中目前肯定无法被查询到,所以我可以使用下面的命令来识别这些分区:

hive> show partitions temp.iteblog_hadoop; OK Time taken: 1.491 seconds hive> MSCK REPAIR TABLE temp.iteblog_hadoop; 17/02/21 16:07:15 WARN log: Updating partition stats fast for: iteblog_hadoop 17/02/21 16:07:15 WARN log: Updated size to 11595 17/02/21 16:07:15 WARN log: Updating partition stats fast for: iteblog_hadoop 17/02/21 16:07:15 WARN log: Updated size to 23861 17/02/21 16:07:15 WARN log: Updating partition stats fast for: iteblog_hadoop 17/02/21 16:07:15 WARN log: Updated size to 784358 17/02/21 16:07:15 WARN log: Updating partition stats fast for: iteblog_hadoop 17/02/21 16:07:15 WARN log: Updated size to 2396891 17/02/21 16:07:15 WARN log: Updating partition stats fast for: iteblog_hadoop 17/02/21 16:07:15 WARN log: Updated size to 2234631 17/02/21 16:07:15 WARN log: Updating partition stats fast for: iteblog_hadoop 17/02/21 16:07:15 WARN log: Updated size to 36621058 17/02/21 16:07:15 WARN log: Updating partition stats fast for: iteblog_hadoop 17/02/21 16:07:15 WARN log: Updated size to 21093250 OK Partitions not in metastore: iteblog_hadoop:dt=2017-02-15 iteblog_hadoop:dt=2017-02-16 iteblog_hadoop:dt=2017-02-17 iteblog_hadoop:dt=2017-02-18 iteblog_hadoop:dt=2017-02-19 iteblog_hadoop:dt=2017-02-20 iteblog_hadoop:dt=2017-02-21 Repair: Added partition to metastore temp.iteblog_hadoop:dt=2017-02-15 Repair: Added partition to metastore temp.iteblog_hadoop:dt=2017-02-16 Repair: Added partition to metastore temp.iteblog_hadoop:dt=2017-02-17 Repair: Added partition to metastore temp.iteblog_hadoop:dt=2017-02-18 Repair: Added partition to metastore temp.iteblog_hadoop:dt=2017-02-19 Repair: Added partition to metastore temp.iteblog_hadoop:dt=2017-02-20 Repair: Added partition to metastore temp.iteblog_hadoop:dt=2017-02-21 Time taken: 0.575 seconds, Fetched 8 row(s)

从上面的命令可以看出,已经将所有的分区加入到Hive的metastore了,是不是很方便啊。

注意

为了让 MSCK 命令工作,分区的目录名必须是 /partition_name=partition_value/结构的,否则将无法添加分区。这时候你必须使用add partition命令了。

原创文章版权归过往记忆大数据(过往记忆)所有,未经许可不得转载。

本文链接: 【Hive分区修复命令MSCK介绍与使用】(https://www.iteblog.com/archives/2035.html)